Organizations aspire to make data-informed decisions. But can they confidently rely on their data? What does that data really tell them, and how was it derived? Paradata, a specialized form of metadata, can provide answers.

Many disciplines use paradata

You won’t find the word paradata in a household dictionary and the concept is unknown in the content profession. Yet paradata is highly relevant to content work. It provides context showing how the activities of writers, designers, and readers can influence each other.

Paradata provides a unique and missing perspective. A forthcoming book on paradata defines it as “data on the making and processing of data.” Paradata extends beyond basic metadata — “data about data.” It introduces the dimensions of time and events. It considers the how (process) and the what (analytics).

Think of content as a special kind of data that has a purpose and a human audience. Content paradata can be defined as data on the making and processing of content.

Paradata can answer:

Where did this content come from?

How has it changed?

How is it being used?

Paradata differs from other kinds of metadata in its focus on the interaction of actors (people and software) with information. It provides context that helps planners, designers, and developers interpret how content is working.

Paradata traces activity during various phases of the content lifecycle: how it was assembled, interacted with, and subsequently used. It can explain content from different perspectives:

Retrospectively

Contemporaneously

Predictively

Paradata provides insights into processes by highlighting the transformation of resources in a pipeline or workflow. By recording the changes, it becomes possible to reproduce those changes. Paradata can provide the basis for generalizing the development of a single work into a reusable workflow for similar works.

Some discussions of paradata refer to it as “processual meta-level information on processes” (processual here refers to the process of developing processes). Knowing how activities happen provides the foundation for sound governance.

Contextual information facilitates reuse. Paradata can enable the cross-use and reuse of digital resources. A key challenge in reusing any content created by others is understanding its origins and purpose. It’s especially challenging when the aim is to encourage collaborative reuse across job roles or disciplines. One study of the benefits of paradata notes: “Meticulous documentation and communication of contextual information are exceedingly critical when (re)users come from diverse disciplinary backgrounds and lack a shared tacit understanding of the priorities and usual practices of obtaining and processing data.”

While paradata isn’t currently utilized in mainstream content work, a number of content-adjacent fields use paradata, pointing to potential opportunities for content developers.

Content professionals can learn from how paradata is used in:

Survey and research data

Learning resources

AI

API-delivered software

Each discipline looks at paradata through different lenses and emphasizes distinct phases of the content or data lifecycle. Some emphasize content assembly, while others emphasize content usage. Some emphasize both, building a feedback loop.

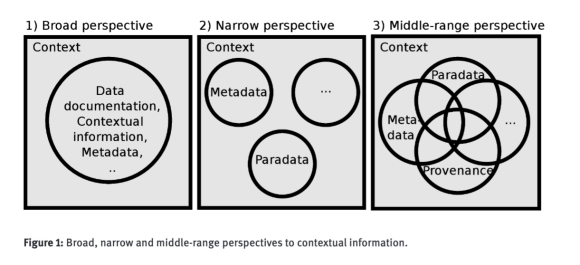

Different perspectives of paradata. Source: Isto Huvila

Content professionals should learn from other disciplines, but they should not expect others to talk about paradata in the same way. Paradata concepts are sometimes discussed using other terms, such as software observability.

Paradata for surveys and research data

Paradata is most closely associated with developing research data, especially statistical data from surveys. Survey researchers pioneered the field of paradata several decades ago, aware of the sensitivity of survey results to the conditions under which they are administered.

The National Institute of Statistical Sciences describes paradata as “data about the process of survey production” and as “formalized data on methodologies, processes and quality associated with the production and assembly of statistical data.”

Researchers realize that how information is assembled can influence what can be concluded from it. In a survey, confounding factors could include a glitch in a form or a leading question that disproportionately prompts people to answer in a given way.

The US Census Bureau, which conducts a range of surveys of individuals and businesses, explains: “Paradata is a term used to describe data generated as a by-product of the data collection process. Types of paradata vary from contact attempt history records for interviewer-assisted operations, to form tracing using tracking numbers in mail surveys, to keystroke or mouse-click history for internet self-response surveys.” For example, the Census Bureau uses paradata to understand and adjust for non-responses to surveys.

Source: NDDI

As computers become more prominent in the administration of surveys, they become actors influencing the process. Computers can record an array of interactions between people and software.

Why should content professionals care about survey processes?

Think about surveys as a structured approach to assembling information about a topic of interest. Paradata can indicate whether users could submit survey answers and under what conditions people were most likely to respond. Researchers use paradata to measure user burden. Paradata helps illuminate the work required to provide information, a topic relevant to content professionals interested in the authoring experience of structured content.

Paradata supports research of all kinds, including UX research. It’s used in archaeology and archives to describe the process of acquiring and preserving assets and changes that may happen to them through their handling. It’s also used in experimental data in the life sciences.

Paradata supports reuse. It provides information about the context in which information was developed, improving its quality, utility, and reusability.

Researchers in many fields are embracing what are known as the FAIR principles: making data Findable, Accessible, Interoperable, and Reusable. Scientists want the ability to reproduce the results of previous research and build upon new knowledge. Paradata supports the goals of FAIR data. As one study notes, “understanding and documentation of the contexts of creation, curation and use of research data…make it useful and usable for researchers and other potential users in the future.”

Content developers similarly should aspire to make their content findable, accessible, interoperable, and reusable for the benefit of others.

Paradata for learning resources

Learning resources are specialized content that needs to adapt to different learners and goals. How resources are used and changed influences the outcomes they achieve. Some education researchers have described paradata as “learning resource analytics.”

Paradata for instructional resources is linked to learning goals. “Paradata is generated through user processes of searching for content, identifying interest for subsequent use, correlating resources to specific learning goals or standards, and integrating content into educational practices,” notes a Wikipedia article.

Data about usage isn’t represented in traditional metadata. A document prepared for the US Department of Education notes: “Say you want to share the fact that some people clicked on a link on my website that leads to a page describing the book. A verb for that is ‘click.’ You may want to indicate that some people bookmarked a video for a class on literature classics. A verb for that is ‘bookmark.’ In the prior example, a teacher presented resources to a class. The verb used for that is ‘taught.’ Traditional metadata has no mechanism for communicating these kinds of things.”

“Paradata may include individual or aggregate user interactions such as viewing, downloading, sharing to other users, favoriting, and embedding reusable content into derivative works, as well as contextualizing activities such as aligning content to educational standards, adding tags, and incorporating resources into curriculum.”

Usage data can inform content development. One article expresses the desire to “establish return feedback loops of data created by the activities of communities around that content—a type of data we have defined as paradata, adapting the term from its application in the social sciences.”

Unlike traditional web analytics, which focuses on web pages or user sessions and doesn’t consider the user context, paradata focuses on the user’s interactions in a content ecosystem over time. The data is linked to content assets to understand their use. It resembles social media metadata that tracks the propagation of events as a graph.

“Paradata provides a mechanism to openly exchange information about how resources are discovered, assessed for utility, and integrated into the processes of designing learning experiences. Each of the individual and collective actions that are the hallmarks of today’s workflow around digital content—favoriting, foldering, rating, sharing, remixing, embedding, and embellishing—are points of paradata that can serve as indicators about resource utility and emerging practices.”

Paradata for learning resources uses the Activity Streams JSON format, which tracks interactions between actors and objects. Each interaction is expressed with a predefined, measurable verb drawn from an “Activity Schema.” The approach can be applied to any kind of content.
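To make the actor, verb, and object pattern concrete, here is a minimal sketch in Python. The field names (actor, verb, object, published) loosely follow the Activity Streams 1.0 JSON shape, and the verbs come from the Department of Education examples quoted above. The identifiers such as "teacher-1" and "video-42", and the helper functions themselves, are hypothetical and only for illustration.

```python
import json
from collections import Counter
from datetime import datetime, timezone


def make_activity(actor, verb, obj_id, when):
    """Build one paradata record in an actor-verb-object shape."""
    return {
        "actor": {"objectType": "person", "id": actor},
        "verb": verb,
        "object": {"objectType": "resource", "id": obj_id},
        "published": when.isoformat(),
    }


def verb_counts(activities, obj_id):
    """Count how often each verb was applied to one content asset."""
    return Counter(a["verb"] for a in activities if a["object"]["id"] == obj_id)


# Hypothetical sample data: all ids and the timestamp are made up.
when = datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc)
activities = [
    make_activity("teacher-1", "bookmark", "video-42", when),
    make_activity("teacher-2", "bookmark", "video-42", when),
    make_activity("teacher-1", "taught", "video-42", when),
    make_activity("student-7", "click", "book-page-3", when),
]

print(json.dumps(activities[0], indent=2))
print(verb_counts(activities, "video-42"))
```

Because every record points at a content asset rather than a page view or session, the same list can be summarized per asset, per actor, or per verb without changing how it is collected.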

Paradata for AI

AI has a growing influence over content development and distribution. Paradata is emerging as a strategy for producing “explainable AI” (XAI). “Explainability, in the context of decision-making in software systems, refers to the ability to provide clear and understandable reasons behind the decisions, recommendations, and predictions made by the software.”

The Association for Intelligent Information Management (AIIM) has suggested that a “cohesive package of paradata may be used to document and explain AI applications employed by an individual or organization.”

Paradata provides a manifest of the AI training data. AIIM identifies two kinds of paradata: technical and organizational.

Technical paradata includes:

The model’s training dataset

Versioning information

Evaluation and performance metrics

Logs generated

Existing documentation provided by a vendor

Organizational paradata includes:

Design, procurement, or implementation processes

Relevant AI policy

Ethical reviews conducted

Source: Patricia C. Franks
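One way to picture such a package is as a structured record with a technical and an organizational half. The sketch below is an assumption about how the two lists above might be modeled, not a format that AIIM prescribes. The class names, field names, and sample values are all hypothetical.

```python
from dataclasses import dataclass, field, asdict


@dataclass
class TechnicalParadata:
    training_dataset: str
    model_version: str
    evaluation_metrics: dict = field(default_factory=dict)
    log_locations: list = field(default_factory=list)
    vendor_docs: list = field(default_factory=list)


@dataclass
class OrganizationalParadata:
    process_records: list = field(default_factory=list)  # design, procurement, implementation
    ai_policies: list = field(default_factory=list)
    ethical_reviews: list = field(default_factory=list)


@dataclass
class AIParadataPackage:
    application: str
    technical: TechnicalParadata
    organizational: OrganizationalParadata

    def missing_items(self):
        """List the paradata fields that are still empty."""
        gaps = []
        sections = (("technical", self.technical), ("organizational", self.organizational))
        for section, record in sections:
            for name, value in asdict(record).items():
                if not value:
                    gaps.append(f"{section}.{name}")
        return gaps


# Hypothetical example: every value here is invented for illustration.
package = AIParadataPackage(
    application="summary-generator",
    technical=TechnicalParadata(
        training_dataset="internal-support-articles",
        model_version="1.2.0",
        evaluation_metrics={"human_review_pass_rate": 0.9},
    ),
    organizational=OrganizationalParadata(ai_policies=["acceptable-use-policy"]),
)
print(package.missing_items())
```

A gap report like this gives a governance team a simple starting point: it shows which parts of the explanation for an AI application have not yet been documented.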

The provenance of AI models and their training has become a governance issue as more organizations use machine learning models and LLMs to develop and deliver content. AI models tend to be “black boxes” that users are unable to untangle and understand.

How AI models are constructed has governance implications, given their potential to be biased or to contain unlicensed copyrighted or other proprietary data. Developing paradata for AI models will be essential if those models are to gain wide adoption.

Paradata and document observability

Observing the unfolding of behavior helps to debug problems to make systems more resilient.

Fabrizio Ferri-Benedetti, whom I met some years ago in Barcelona at a Confab conference, recently wrote about a concept he calls “document observability” that has parallels to paradata.

Content practices can borrow from software practices. As software becomes more API-focused, firms are monitoring API logs and metrics to understand how various routines interact, a field called observability. The goal is to identify and understand unanticipated occurrences. “Debugging with observability is about preserving as much of the context around any given request as possible, so that you can reconstruct the environment and circumstances that triggered the bug.”

Observability utilizes a profile called MELT: Metrics, Events, Logs, and Traces. MELT is essentially paradata for APIs.

Software observability pattern. Source: Karumuri, Solleza, Zdonik, and Tatbul
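As a rough illustration of how the four MELT signals relate, the following Python sketch records all of them for a single content request. It uses only the standard library. The Telemetry class, the step names, and the "article-17" identifier are hypothetical, and real observability tooling would do far more than this.

```python
import logging
import uuid
from collections import Counter
from dataclasses import dataclass, field

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("content-api")


@dataclass
class Telemetry:
    metrics: Counter = field(default_factory=Counter)  # M: numeric aggregates
    events: list = field(default_factory=list)         # E: discrete occurrences
    traces: dict = field(default_factory=dict)         # T: steps grouped by request

    def record(self, trace_id, step, content_id):
        """Capture one step of a request as a metric, an event, a log line, and a trace entry."""
        self.metrics[f"{step}.count"] += 1
        self.events.append({"trace_id": trace_id, "step": step, "content_id": content_id})
        self.traces.setdefault(trace_id, []).append(step)
        log.info("trace=%s step=%s content=%s", trace_id, step, content_id)  # L: log line


telemetry = Telemetry()
trace_id = uuid.uuid4().hex  # one id ties together every step of the request
for step in ("fetch", "assemble", "render"):
    telemetry.record(trace_id, step, "article-17")

print(telemetry.traces[trace_id])
```

The shared trace id is what preserves context: it lets someone reconstruct, after the fact, the sequence of steps that a particular piece of content went through.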

Content, like software, is becoming more API-enabled. Content can be tapped from different sources and fetched interactively. The interaction of content pieces in a dynamic context showcases the content’s temporal properties.

When things behave unexpectedly, systems designers need the ability to reverse engineer behavior. An article in IEEE Software states: “One of the principles for tackling a complex system, such as a biochemical reaction system, is to obtain observability. Observability means the ability to reconstruct a system’s internal state from its outputs.”

Ferri-Benedetti notes, “Software observability, or o11y, has many different definitions, but they all emphasize collecting data about the internal states of software components to troubleshoot issues with little prior knowledge.”

Because documentation is essential to the software’s operation, Ferri-Benedetti advocates treating “the docs as if they were a technical feature of the product,” where the content is “linked to the product by means of deep linking, session tracking, tracking codes, or similar mechanisms.”

He describes document observability (“do11y”) as “a frame of mind that informs the way you’ll approach the design of content and connected systems, and how you’ll measure success.”

In contrast to observability, which relies on incident-based indexing, paradata is generally defined by a formal schema. A schema allows stakeholders to manage and change the system instead of merely reacting to it and fixing its bugs.

Applications of paradata to content operations and strategy

Why adopt a new concept most people have never heard of? Because content professionals must expand their toolkit.

Content is becoming more complex. It touches many actors: employees in various roles, customers with multiple needs, and IT systems with different responsibilities. Stakeholders need to understand the content’s intended purpose, its use in practice, and whether the two diverge. Do people need to adapt content because the original does not meet their needs? Should people be adapting existing content, or should that content be easier to reuse in its original form?

Content continuously evolves and changes shape, acquiring emergent properties. People and AI customize, repurpose, and transform content, making it more challenging to know how these variations affect outcomes. Content decisions involve more people over extended time frames.

Content professionals need better tools and metrics to understand how content behaves as a system.

Paradata provides contextual data about the content’s trajectory. It builds on two kinds of metadata that connect content to user action:

Administrative metadata capturing the actions of the content creators, such as author, intended audience, approver, version, and when last updated

Usage metadata capturing the intended and actual uses of the content, both internal (asset role, rights, where item or assets are used) and external (number of views, average user rating)

Paradata also incorporates newer forms of semantic and blockchain-based metadata that address change over time:

Provenance metadata

Actions schema types

Provenance metadata has become essential for image content, which can be edited and transformed in multiple ways that change what it represents. Organizations need to know the source of the original and what edits have been made to it, especially with the rise of synthetic media. Metadata can indicate what an image was based on or derived from, who made changes, or what software generated changes. Two corporate initiatives focused on provenance metadata are the Content Authenticity Initiative and the Coalition for Content Provenance and Authenticity.

Actions are an established — but underutilized — dimension of metadata. The widely adopted schema.org vocabulary has a class of actions that address both software interactions and physical world actions. The schema.org actions build on the W3C Activity Streams standard, which was upgraded in version 2.0 to semantic standards based on JSON-LD types.
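For a sense of what action metadata looks like, here is a small Python sketch that builds a schema.org UpdateAction as JSON-LD. The type and the properties used (agent, object, instrument, startTime, actionStatus) are part of the schema.org vocabulary. The helper function, the names, and the example.com URL are hypothetical.

```python
import json


def update_action(agent_name, content_url, start_time, tool_name):
    """Describe one content edit as a schema.org UpdateAction in JSON-LD."""
    return {
        "@context": "https://schema.org",
        "@type": "UpdateAction",
        "agent": {"@type": "Person", "name": agent_name},
        "object": {"@type": "Article", "url": content_url},
        "instrument": {"@type": "SoftwareApplication", "name": tool_name},
        "startTime": start_time,
        "actionStatus": "https://schema.org/CompletedActionStatus",
    }


# Hypothetical values for illustration only.
action = update_action(
    agent_name="Example Editor",
    content_url="https://example.com/articles/pricing-overview",
    start_time="2024-01-15T09:30:00Z",
    tool_name="Example CMS",
)
print(json.dumps(action, indent=2))
```

Recording who acted, on what, with which tool, and when answers the question "When and how was this content modified?" in a form that other systems can read.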

Content paradata can clarify common issues such as:

How can content pieces be reused?

What was the process for creating the content, and can one reuse that process to create something similar?

When and how was this content modified?

Paradata can help overcome operational challenges such as:

Content inventories where it is difficult to distinguish similar items or versions

Content workflows where it is difficult to model how distinct content types should be managed

Content analytics, where the performance of content items is bound up with channel-specific measurement tools

Implementing content paradata must be guided by a vision. The most mature application of paradata – for survey research – has evolved over several decades, prompted by the need to improve survey accuracy. Other research fields are adopting paradata practices as research funders insist that data be “FAIR.” Change is possible, but it doesn’t happen overnight. It requires having a clear objective.

It may seem unlikely that content publishing will embrace paradata anytime soon. However, the explosive growth of AI-generated content may provide the catalyst for introducing paradata elements into content practices. The unmanaged generation of content will be a problem too big to ignore.

The good news is that online content publishing can take advantage of existing metadata standards and frameworks that provide paradata. What’s needed is to incorporate these elements into content models that manage internal systems and external platforms.

Online publishers should introduce paradata into systems they directly manage, such as their digital asset management system or customer portals and apps. Because paradata can encompass a wide range of actions and behaviors, it is best to prioritize tracking actions that are difficult to discern but likely to have long-term consequences.

Paradata can provide robust signals to reveal how content modifications impact an organization’s employees and customers.

– Michael Andrews

The post Paradata: where analytics meets governance appeared first on Story Needle.